The promise of AI in business has always carried an unspoken condition: trust. If a company deploys artificial intelligence to automate decisions, process sensitive data, or interact directly with customers, it has to work—and it has to do so securely. This expectation is why recent findings around DeepSeek AI have set off alarms.

Independent researchers put the platform through a battery of standard security evaluations and reported failures on multiple fronts, questioning its readiness for enterprise use. As businesses rush to integrate AI, the weaknesses exposed in DeepSeek AI’s system highlight a growing need to pause and scrutinize what lies under the hood.

How DeepSeek AI’s Security Shortcomings Came to Light?

DeepSeek AI is marketed as a general-purpose artificial intelligence solution designed for businesses of all sizes. It offers natural language processing, predictive analytics, and decision-making support. The platform gained traction quickly thanks to its ability to generate human-like responses and process large datasets with minimal human oversight. However, its growing popularity drew the attention of cybersecurity professionals, who decided to test its claims of enterprise-grade security.

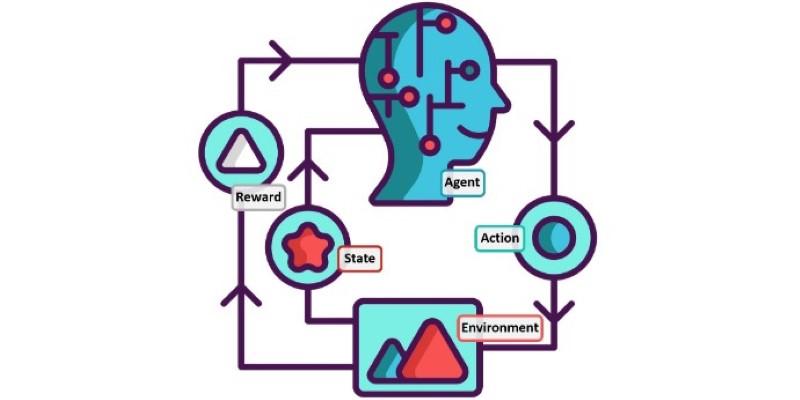

The tests revealed several concerning flaws. DeepSeek AI struggled with protecting user data against injection attacks, where malicious inputs trick the system into exposing confidential information. In scenarios designed to mimic phishing and prompt manipulation, the AI failed to identify malicious intent and willingly returned sensitive internal instructions or fabricated convincing false information. This suggests the model lacks proper guardrails against prompt injection, which remains one of the more common and dangerous ways to exploit AI systems.

Another weakness uncovered was poor encryption handling during data transit. Testers found that when interacting with DeepSeek AI through its API, communications could be intercepted and read in plain text under certain conditions. While encryption is standard practice for any system handling sensitive business data, DeepSeek’s inconsistent implementation here opens the door to eavesdropping and unauthorized data access. These lapses cast doubt on the company’s assurances of secure-by-design architecture.

Why These Failures Matter for Businesses?

For businesses looking to adopt AI, security is not just a technical detail — it’s a non-negotiable requirement. Organizations handle customer data, financial records, trade secrets, and personal employee information. If an AI platform can be tricked into revealing confidential material or can be intercepted during use, it creates real and immediate risks.

The prompt injection vulnerabilities found in DeepSeek AI, for example, can lead to data leaks or even manipulation of business decisions by feeding the system malicious inputs. In industries such as healthcare, finance, or law, such lapses can breach compliance regulations and expose firms to legal and financial penalties. Even beyond compliance, trust with clients and customers can erode overnight if their data is mishandled.

Encryption weaknesses compound the risk. Secure communication channels are fundamental to protecting data as it moves between systems. Without consistent encryption, businesses relying on DeepSeek AI may unknowingly expose sensitive data to interception, potentially compromising deals, client information, or intellectual property.

The failures discovered suggest that DeepSeek AI may not have been thoroughly tested in high-stakes environments before release. That should concern business leaders who depend on reliable technology partners. While AI technology itself is evolving quickly, these are not new problems—they’re basic requirements any enterprise-ready software should meet.

Broader Lessons for the AI Industry

The DeepSeek AI case is not unique in revealing the fragility of many AI platforms being marketed today. With the surge in demand for generative and predictive AI tools, several companies have rushed products to market before fully addressing security concerns. This situation illustrates a broader trend: functionality often takes precedence over resilience and safety.

AI developers face a tough balance between keeping up with competitors and ensuring robust safeguards are in place. Too often, user experience and performance are prioritized because they are more visible to potential buyers. Security, however, is invisible until it fails. The lesson here is clear: businesses must ask harder questions about how thoroughly an AI platform has been tested against known attack vectors and what specific steps it takes to protect user data.

It's also worth noting that the weaknesses in DeepSeek AI's system underline the need for independent, transparent audits of AI tools. Many platforms are essentially black boxes, and users have little visibility into their inner workings. This lack of transparency can lead to overconfidence and misplaced trust. The industry would benefit from agreed-upon standards for security testing that are clearly communicated to end users.

What Businesses Should Do Now?

For organizations that have already adopted DeepSeek AI or are considering doing so, these findings don’t necessarily mean abandoning AI altogether. Instead, they highlight the need for due diligence. Businesses should review their current AI implementations and evaluate the associated risks, especially around data handling and exposure to malicious inputs.

Security teams should work closely with AI vendors to understand how vulnerabilities are being addressed. Companies should also consider conducting their internal tests or hiring independent auditors to evaluate AI systems before full deployment. Staff training on how to interact safely with AI tools can also help reduce the likelihood of accidental data exposure or manipulation.

While DeepSeek AI may still have value as a tool, it clearly requires improvements in its security posture before it can be considered a reliable option for sensitive or regulated environments. In the meantime, businesses should weigh whether the convenience and capabilities of the platform outweigh the risks uncovered by these tests.

Conclusion

DeepSeek AI’s failures in recent security tests highlight serious risks for businesses handling sensitive data. A system vulnerable to attacks and leaks undermines trust and exposes organizations to regulatory and financial consequences. Companies should reassess their use of the platform, demand transparency from vendors, and prioritize thorough testing before deployment. While AI remains valuable, it must be secure and reliable to truly benefit businesses. This case serves as a clear reminder that security cannot be an afterthought when adopting new technology.